IMDRF just opened consultation on its AI Medical Device Lifecycle Framework (N93), and if you build AI-enabled medical devices, this document will shape your 2027 operating reality.

IMDRF does not regulate anyone directly, but FDA, EU, MHRA, PMDA, TGA, and ANVISA all use its output as the template for their own guidance. Whatever goes final becomes the global baseline within 12 to 18 months.

Here are the four most critical takeaways for manufacturers.

Your LLM provider is now officially a SOUP supplier

Foundation models (GPT, Claude, Gemini) are treated as Software of Unknown Provenance (SOUP) under IEC 62304. You need a supplier credibility assessment, version history, update cadence, and support commitments, all documented and audit-ready.
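
One way to keep those four items audit-ready is a structured record per third-party model, with a check that flags anything still undocumented. This is a minimal sketch, not a schema from the framework or from IEC 62304: the `SoupRecord` class, its field names, and the supplier and document identifiers are all hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class SoupRecord:
    """One SOUP entry for a third-party foundation model (hypothetical schema)."""
    supplier: str
    model_version: str
    credibility_assessment: str = ""  # reference to the assessment document
    version_history: str = ""         # reference to the tracked version log
    update_cadence: str = ""          # how often the supplier ships changes
    support_commitments: str = ""     # reference to the support terms


def missing_items(record: SoupRecord) -> list[str]:
    """Return the names of fields that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


# Illustrative values only.
record = SoupRecord(
    supplier="ExampleAI",
    model_version="example-model-2026-01",
    credibility_assessment="QA-DOC-001",
)
print(missing_items(record))
```

Running a check like this before each release shows at a glance which supplier evidence is still missing from the file.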

Third-party model drift is now a named post-market risk

"Performance degradation due to changes in third-party, general-purpose models" is listed explicitly. When a provider ships a new checkpoint, that is a regulatory event for your device. You need monitoring that detects the change and measures its effect on device performance.

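Monitoring for third-party drift can be sketched as two checks: compare the model version the provider reports against the one you validated, and re-score a fixed reference set against your validated baseline. Everything here is an assumption for illustration: the function name, the accuracy metric, and the 0.02 tolerance are hypothetical and not taken from the framework.

```python
from dataclasses import dataclass


@dataclass
class DriftCheck:
    version_changed: bool
    accuracy: float
    degraded: bool


def check_third_party_drift(
    validated_version: str,
    reported_version: str,
    expected: list[str],
    predictions: list[str],
    baseline_accuracy: float,
    tolerance: float = 0.02,  # illustrative threshold, set by your own risk analysis
) -> DriftCheck:
    """Compare a fresh run on a fixed reference set with the validated baseline."""
    if not expected or len(expected) != len(predictions):
        raise ValueError("expected and predictions must be non-empty and equal length")
    correct = sum(e == p for e, p in zip(expected, predictions))
    accuracy = correct / len(expected)
    return DriftCheck(
        version_changed=reported_version != validated_version,
        accuracy=accuracy,
        degraded=accuracy < baseline_accuracy - tolerance,
    )


# Illustrative values only.
result = check_third_party_drift(
    validated_version="example-model-2026-01",
    reported_version="example-model-2026-03",
    expected=["a", "b", "a", "b"],
    predictions=["a", "b", "b", "b"],
    baseline_accuracy=0.95,
)
print(result)
```

A version change with no measured degradation still deserves a record in the post-market file; a degradation with no announced version change is the case this kind of scheduled check exists to catch.
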
Customizable vs. Adaptable needs to live in your QMS

Customizable means tunable within pre-approved bounds. Adaptable means the algorithms themselves can change. Section 5.6 requires fundamentally different monitoring regimes for each. One post-market plan will not cover both.

Red teaming is expected

Section 5.4.1 names adversarial red teaming as a valid robustness-validation method. For high-risk AI devices, expect it to become the norm, and include it in your verification and validation plan.

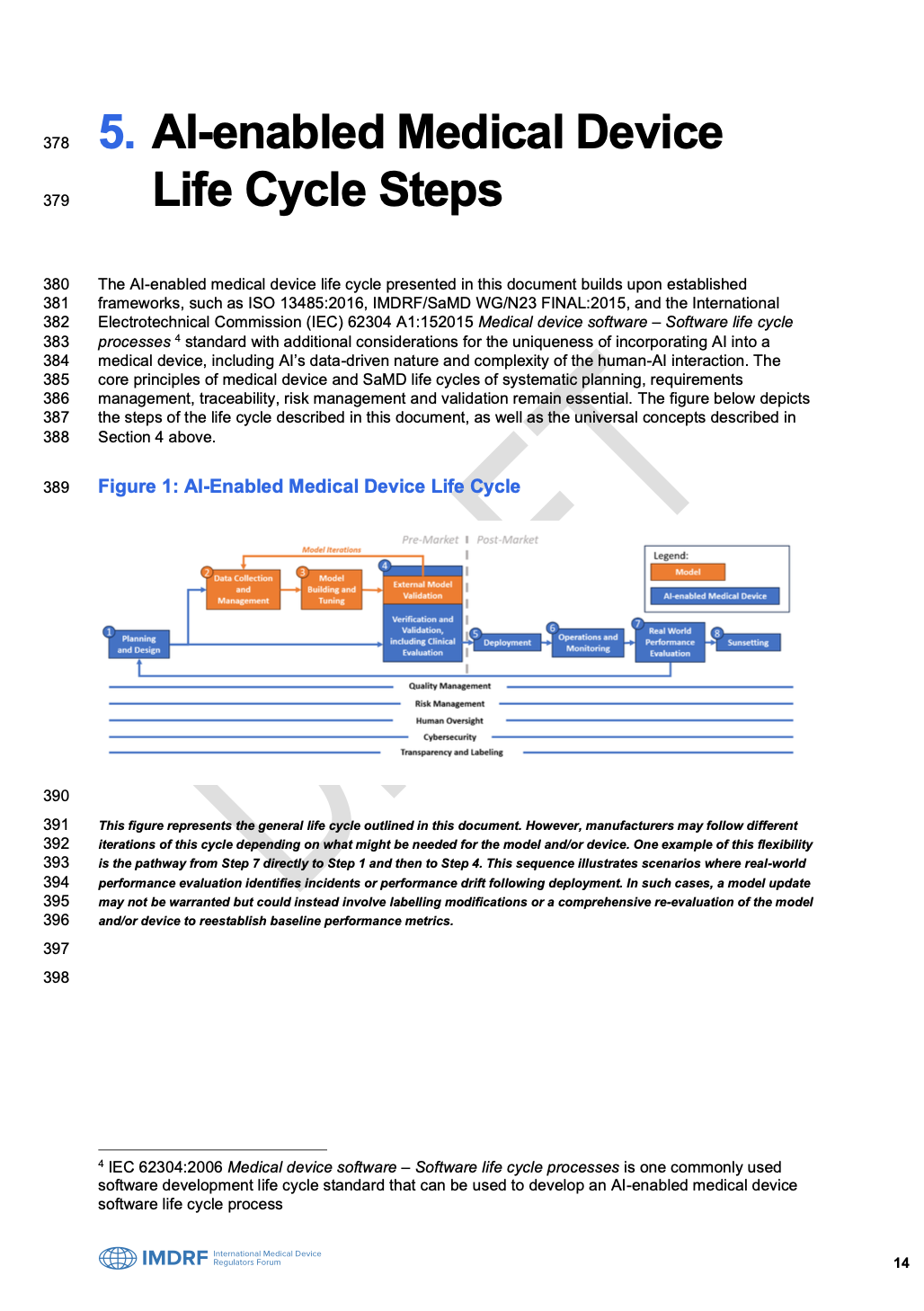

Consultation closes 10 July 2026 via the IMDRF Consultation Hub on Citizen Space. You can submit a short comment or a fully argued case; either one shapes what your regulator enforces in 2027.